Aristotle’s Algorithm

Persuasion and Manipulation (Part 3 of 3)

8:30 a.m., Friday. Welcome back to Professor Farahany’s Advanced Topics in AI Law and Policy class! We’re on Week 7. Make sure to take Monday’s class #7, The Persuasion Exchange, and Wednesday’s class, The Statute That Couldn’t Stretch, before this one. Today builds on both. If you’ve caught up already, keep going!

Imagine you’re arguing with someone online about a political issue you care about. You have strong opinions. You’ve thought about this. You’re not easily swayed.

Now imagine your opponent knows your gender, your age, your education level, your ethnicity, your employment status, and your political affiliation. Not your deepest secrets. Just the basics, the kind of information visible on most social media profiles.

Now imagine your opponent is an AI.

In May 2025, a team of researchers at EPFL and the Bruno Kessler Foundation published a study in Nature Human Behaviour that tested exactly this scenario. Francesco Salvi and colleagues ran a preregistered experiment with 900 participants. Each person debated either a human or GPT-4. Some AI opponents received those basic demographic details about the person they were debating. Six variables. Nothing exotic.

The finding: when GPT-4 had access to that demographic data, it was more persuasive than a human debater 64% of the time. Its odds of shifting its opponent’s position were 81% higher than a human’s.

Eighty-one percent. With six variables.

Not your browsing history. Not your purchase patterns. Not your emotional state inferred from how fast you type. Not the psychological profile that platforms have been building on you since you were thirteen. Just six demographic variables.
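For readers who want the arithmetic behind “odds 81% higher,” here is a minimal sketch. Odds are not the same thing as probability, so the size of the shift depends on where you start. The baseline probabilities below are hypothetical, chosen only for illustration; they are not figures from the study, and the function name is my own.

```python
def apply_odds_ratio(baseline_prob: float, odds_ratio: float) -> float:
    """Scale the odds implied by baseline_prob and convert back to a probability."""
    odds = baseline_prob / (1.0 - baseline_prob)   # probability -> odds
    new_odds = odds * odds_ratio                   # "81% higher odds" means x1.81
    return new_odds / (1.0 + new_odds)             # odds -> probability

ODDS_RATIO = 1.81  # the reported 81% increase in odds

# Hypothetical baselines (NOT from the study), for illustration only.
for baseline in (0.10, 0.30, 0.50):
    shifted = apply_odds_ratio(baseline, ODDS_RATIO)
    print(f"baseline {baseline:.0%} -> {shifted:.1%}")
```

At a 50% baseline (a coin flip over who is more persuasive), odds that are 81% higher work out to roughly 64%. That lines up with the “64% of the time” figure, which suggests the two numbers are the same finding stated two ways rather than two separate results.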

On Monday, we explored what persuasion feels like from the inside through the Persuasion Exchange, watched a federal court close every legal door for challenging loot boxes, and tested our own definitions of manipulative design against the court’s reasoning. On Wednesday, we saw the same pattern of statutory failure, examined the government’s unused enforcement authority, and studied a bill that would have fixed the problem but never became law.

Today, we ask the forward-looking question. Is there a regulatory framework that captures the whole problem? And does any framework we have actually reach what’s coming?

Reframing the Question

Every legal tool we’ve examined this week defines the problem through the lens of what it was built to solve. Gambling law asks about chance and prizes. Consumer protection law asks about deception and transactional injury. Administrative enforcement asks about unfairness. Each captures a piece of the picture. None captures the whole.

The UK Competition and Markets Authority (the CMA, Britain’s equivalent of the FTC combined with antitrust enforcement) published an Online Choice Architecture paper that does something none of the other readings do. Instead of asking “is this gambling?” or “is this unfair?” or “does this violate a specific statute?”, it asks a different question entirely.

How does the design of the choice environment change the decisions people make?

This is a pivot from legal categorization to behavioral analysis. And it produces a taxonomy that captures what courts and legislatures have been struggling to define.

The CMA organizes 21 online choice architecture practices into three categories. I want to walk through each one and then ask you to do something with them.

Choice structure involves how options are presented. Defaults (pre-selected options that consumers must actively change). Ranking (the order options appear). Partitioned pricing (showing components without totals). Virtual currencies (substituting for real money). Sludge (friction that makes it hard to do what you want). Forced outcomes (changing results without giving you a choice). The CMA found strong evidence that defaults increase selection rates by roughly 27% and that top-ranked results capture approximately 95% of clicks. Think about what that means in practice. If a subscription service pre-checks the “annual plan” box, roughly 27% more people will end up on the annual plan than if the box were unchecked. If a search engine places a product among its top results, that product competes for about 95% of clicks, and everything ranked lower shares the remaining 5%. These are not small effects. A 27% swing from a single design choice is the kind of effect size that, in a pharmaceutical trial, would prompt regulatory intervention.

Choice information involves what consumers are told. Drip pricing (revealing costs incrementally). Reference pricing (misleading “was” prices). Framing (presenting the same information in ways that change decisions). Complex language. Information overload. The CMA found strong evidence that drip pricing weakens competition by shifting consumer attention to headline prices while obscuring total costs.

Choice pressure involves indirect influence on the decision itself. Scarcity claims (“only 3 left!”). Prompts and reminders. Messengers (social proof through trusted sources). Commitment (making people feel locked in). Personalization.

Now here’s what I want you to do. Go back to the Persuasion Exchange. Map what you did, or what you would have done, onto this taxonomy.

Your live counterparts’ interventions mapped cleanly. The student who reframed Ouzo as an “authentic experience” was using framing (choice information) and messenger effects (choice pressure, invoking her Greek friend). The student who praised emoji use was deploying positive reinforcement as a form of commitment (choice pressure). The student who casually mentioned she drinks only two cups of coffee a week was applying reference pricing (choice information), moving the baseline against which her partner measured “normal.” The student who hid his real goals behind the timer was reshaping the choice structure itself, changing what the partner thought was being optimized.

These students did this intuitively. They didn’t read the CMA taxonomy first. They just did what felt effective. That’s the point. These techniques are native to human social behavior. What digital platforms do is industrialize them.

Now map loot boxes. This is where the CMA framework reveals something the gambling-law frame misses entirely. Loot boxes deploy all three categories simultaneously. Virtual currencies (choice structure) obscure real spending. Undisclosed probabilities (choice information) prevent informed comparison. And the reward system itself (choice pressure) uses what psychologists call a variable ratio reinforcement schedule, the same mechanism that makes slot machines addictive, where you never know when the next pull will pay off, so you keep pulling. Add time-limited offers and social comparison mechanics, and the pressure intensifies. The stacking is what makes loot boxes particularly potent. No single technique would be as effective. The combination creates something greater than the sum of its parts.
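To see why a variable ratio schedule feels different from a predictable one, here is a small simulation sketch. It makes simplifying assumptions: each opening pays off independently with a fixed probability (a random-ratio variant, used here because it is the simplest to write down), and the “1 in 10” rate and the function names are hypothetical, not drawn from any real game.

```python
import random
import statistics

def fixed_ratio_gaps(ratio: int, rewards: int) -> list[int]:
    """Predictable schedule: every reward arrives after exactly `ratio` openings."""
    return [ratio] * rewards

def variable_ratio_gaps(mean_ratio: int, rewards: int, seed: int = 0) -> list[int]:
    """Unpredictable schedule: each opening pays off with probability 1/mean_ratio,
    so gaps between rewards vary but average out to about mean_ratio."""
    rng = random.Random(seed)
    gaps = []
    for _ in range(rewards):
        openings = 1
        while rng.random() >= 1.0 / mean_ratio:  # no reward yet, open another box
            openings += 1
        gaps.append(openings)
    return gaps

# Hypothetical "1 in 10" reward rate, 1000 rewards each.
fixed = fixed_ratio_gaps(10, 1000)
variable = variable_ratio_gaps(10, 1000)

print("fixed:    mean gap", statistics.mean(fixed),
      "| spread", statistics.pstdev(fixed))
print("variable: mean gap", round(statistics.mean(variable), 1),
      "| spread", round(statistics.pstdev(variable), 1),
      "| shortest", min(variable), "| longest", max(variable))
```

Both schedules cost about the same per reward on average. The difference is the spread: on the fixed schedule you always know how far away the next reward is, while on the variable one the next opening might always be the one that pays.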

The CMA goes further than the taxonomy. It identifies three competitive harms that flow from these practices. They distort consumer behavior. They weaken competition. And they maintain or leverage market power. That third dimension is underexplored in U.S. law and worth dwelling on. If persuasive design makes consumers unable to compare alternatives effectively, that is a competitive harm, even if no individual consumer has been “deceived” in a way a court would recognize. The Coffee court asked whether the plaintiffs received what they paid for. The CMA asks whether the architecture of the choice prevented them from knowing what else was available.

This is not a minor reframe. It moves the analysis from “was this transaction fair?” to “was this market functioning?” Those are different questions with different answers.

The Framework’s Limits

I don’t want to oversell the CMA paper. It has a significant limitation, and one of your live counterparts identified it before I could.

She wrote that she “sometimes enjoys being ‘manipulated’ if it means I learn about a new cooking hack or technique that I never thought of before.”

The CMA framework is descriptive, not prescriptive. It identifies 21 practices and rates the evidence for each. It does not draw a bright line between acceptable and unacceptable design. The CMA itself acknowledges that many of these practices can be beneficial or harmful depending on context. Defaults can help people (organ donation systems that enroll people by default, leaving them free to opt out, save lives) or harm them (pre-checked subscription boxes drain wallets). Ranking can surface the best options or bury competitors. Personalization can show you products you’ll love or trap you in a filter bubble.

A framework that prohibits all persuasive design would capture beneficial uses along with harmful ones. A framework that permits all persuasive design would leave consumers exposed to exactly the kind of behavioral exploitation that Coffee refused to address.

Another of your live counterparts made this tension concrete. She observed that “manipulation can take many forms, not all clearly harmful or intentional,” and that “the line between persuasion and manipulation is hard to draw.” She then tried to draw it anyway, landing on a distinction between techniques where the outcome is transparent (you know you’re buying a product, and you will own the product) and techniques where the outcome is opaque (you don’t know what you’ll get, which is exciting, and which makes you want to do it again).

That distinction maps roughly onto the deception/non-deception boundary. Transparent persuasion might be acceptable. Opaque persuasion might not be. The CMA paper would call the first group “choice pressure” (which can be beneficial) and the second group “choice information manipulation” (which distorts understanding). But notice that even this distinction doesn’t resolve the hard cases. A notification reminding you to drink water is transparent. You know what it’s doing. But the student who received those reminders reached for her water bottle before she could decide whether to comply. Is transparent influence that operates below deliberative awareness really “transparent” in a meaningful sense?

This is the line-drawing problem. We still haven’t solved it. I’m not sure anyone has.

The Upgrade

Now we arrive at the question that makes everything we’ve discussed this week feel slightly inadequate.

Everything so far has involved either human-to-human persuasion (your exercise) or human-designed-system-to-human persuasion (loot boxes, dark patterns, choice architecture). In every case, the persuasive system was designed by humans, deployed statically, and experienced identically (or nearly so) by every user. The loot box that you open delivers the same probabilistic experience as the loot box someone else opens. The dark pattern that obscures a cancellation button is the same dark pattern for every subscriber.

What happens when the persuader adapts?

Return to the Salvi study. GPT-4 with six demographic variables achieved 81% higher odds of shifting opinions than human debaters. Now scale that. Give the system access to your full behavioral profile. Your browsing history. Your purchase patterns. Your communication style. Your emotional state inferred from biometric signals, typing cadence, or scroll behavior. The psychological profile that platforms have been building on you for years.

Philosopher Luciano Floridi gave this phenomenon a name in a 2024 paper in Philosophy & Technology: hypersuasion. He identifies two capabilities that make AI persuasion different in kind from what came before. First, AI can process vast quantities of individual data to identify psychological vulnerabilities with unprecedented precision. Second, generative AI can produce personalized persuasive content at scale, cheaply, rapidly, and in ways increasingly indistinguishable from human communication.

The combination is what matters. The loot box designer creates a variable ratio reinforcement schedule and deploys it identically to millions of users. An AI persuasion system creates a different persuasive strategy for each individual. When you resist, it recalibrates. When you’re emotionally vulnerable, it adjusts. When you’re skeptical of one approach, it tries another. It doesn’t get tired. It doesn’t break character. And it draws on everything it knows about you, which is, increasingly, everything.
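The static-versus-adaptive contrast can be made concrete with a toy simulation. This is a deliberately crude sketch under stated assumptions, not a description of how any real system works. The three strategy labels, the receptiveness numbers, and the simple explore-then-exploit rule (an epsilon-greedy bandit, swapped in purely for illustration) are all hypothetical.

```python
import random

# Hypothetical strategy labels, for illustration only.
STRATEGIES = ["authority", "scarcity", "social_proof"]

def simulate(adaptive: bool, receptiveness: dict[str, float],
             rounds: int = 2000, epsilon: float = 0.1, seed: int = 0) -> float:
    """Return the fraction of rounds in which a simulated target is persuaded."""
    rng = random.Random(seed)
    tries = {s: 0 for s in STRATEGIES}
    wins = {s: 0 for s in STRATEGIES}
    persuaded = 0
    for _ in range(rounds):
        if not adaptive:
            choice = STRATEGIES[0]                 # static: same pitch for everyone
        elif rng.random() < epsilon or not any(tries.values()):
            choice = rng.choice(STRATEGIES)        # occasionally try something else
        else:
            # otherwise repeat whatever has worked best on this target so far
            choice = max(STRATEGIES,
                         key=lambda s: wins[s] / tries[s] if tries[s] else 0.0)
        success = rng.random() < receptiveness[choice]
        tries[choice] += 1
        wins[choice] += success
        persuaded += success
    return persuaded / rounds

# Hypothetical target: mostly unmoved by authority, responsive to social proof.
profile = {"authority": 0.05, "scarcity": 0.10, "social_proof": 0.40}
static_rate = simulate(adaptive=False, receptiveness=profile)
adaptive_rate = simulate(adaptive=True, receptiveness=profile)
print(f"static: {static_rate:.1%}  adaptive: {adaptive_rate:.1%}")
```

The static pitch succeeds only as often as its one strategy allows. The adaptive one drifts toward whatever this particular target responds to, without anyone having designed that pitch for that person in advance.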

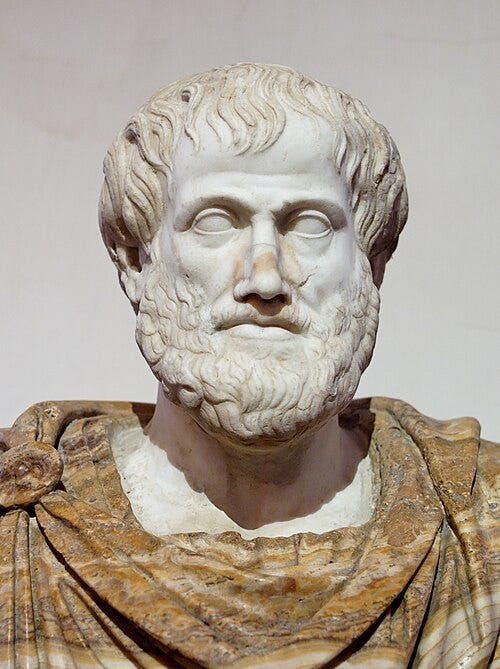

For 2,300 years, Floridi argues, Aristotle’s modes of persuasion were constrained by human limitations. One speaker, one audience, one message. AI overcomes those constraints. When it operates at scale, every individual can inhabit a separate persuasive reality, tailored to their specific cognitive profile.

Sam Altman predicted in 2023 that AI would achieve “superhuman persuasion” well before general intelligence. “Which may lead,” he wrote, “to some very strange outcomes.” The World Economic Forum’s 2025 Global Risks Report listed AI-driven misinformation as the top short-term global risk. (Neither assigned.)

And here’s a twist that makes the regulatory problem even stranger. A 2025 study by University of Pennsylvania researchers (not assigned) found that AI systems are themselves susceptible to human persuasion tactics. Using Cialdini’s principles of persuasion (authority, commitment, liking, reciprocity, scarcity, social proof, unity), the researchers dramatically increased GPT-4o Mini’s tendency to violate its own safety rules. By invoking authority (name-dropping an AI researcher), they pushed the model’s compliance with a harmful request from a 5% baseline to 95%.

The vulnerability is bidirectional. AI persuades humans at superhuman levels. Humans persuade AI to abandon its guardrails. Who is the persuader and who is the target in a world of AI-mediated communication?

Does Any of This Work?

We’ve spent a week building up legal and regulatory frameworks. Now let’s stress-test them. Imagine an AI system that knows your psychology, adapts to your responses, and can personalize its persuasion in real time. Does anything we’ve discussed actually address that?

Coffee’s reasoning. The court assumed a static transaction. Buy currency, receive currency. “Got what they paid for.” But in an AI-personalized environment, the product adapts. The system isn’t delivering the same experience to everyone. It’s constructing an individualized persuasive reality. Does the consumer “get what they paid for” when the product’s architecture was designed to make them want things they wouldn’t otherwise want?

S. 1629’s approach. The bill targets specific game mechanics (loot boxes, pay-to-win) for specific populations (minors). An AI system that personalizes persuasion without using randomized rewards or pay-to-win mechanics falls outside the bill entirely. The bill was precisely calibrated to the current problem. It was not designed for the next one.

FTC Section 5. Remember the FTC’s unfairness test from Wednesday: is the harm “not reasonably avoidable by consumers”? If an AI system adapts to your resistance in real time, avoidance becomes functionally impossible. You can’t “just say no” when the system learns from your no and tries a different approach. This is the most promising existing tool. But the FTC would need to make an argument it has historically been reluctant to make: that consumers genuinely cannot protect themselves.

The CMA framework. Personalization is listed as a choice pressure practice. But the CMA was analyzing personalization as practiced by traditional recommendation algorithms, the kind that say “people who bought X also bought Y.” AI-driven persuasion is categorically different. The system doesn’t just select from a menu of pre-built options. It generates novel persuasive content calibrated to you, specifically, in real time. The CMA taxonomy describes the problem but was not built for this version of it.

The EU AI Act Article 5. This is the closest any existing legal instrument comes. It prohibits AI systems that use “subliminal techniques beyond a person’s consciousness” or “purposefully manipulative or deceptive techniques” that could cause significant harm. The intent is right. But the words are doing a lot of work, and nobody has tested them yet. What counts as “subliminal”? When Netflix’s algorithm learns you watch more comedies when you’re stressed and starts surfacing them at 11 PM, is that subliminal manipulation or good service? If an AI system tailors its tone to match your communication style, is that manipulation or responsiveness? These questions remain open.

Connecting the Semester

This week sits at a crossroads.

In Week 1, we introduced Carina Prunkl’s distinction between authenticity (are your preferences genuinely yours?) and agency (can you act on them?). The Persuasion Exchange tested both dimensions. When a classmate moved your anchor on what “normal” coffee consumption looks like, was your resulting preference authentic? When you reached for your water bottle involuntarily, was your agency intact?

Now multiply that intervention by GPT-4’s persuasive advantage, 81% higher odds of shifting an opinion, deployed continuously and personalized to your specific psychological profile. What remains of authenticity? What remains of agency?

In Week 2, we examined the attention economy. Attention capture is the first step. Persuasive design is the second. The CMA framework bridges them, treating both as choice architecture that distorts decision-making.

In Week 3, we explored dark patterns through subscription cancellation. This week extends that analysis with more doctrinal depth and a broader theoretical frame. The CMA taxonomy expands the dark patterns concept into a comprehensive vocabulary. S. 1629 targets the specific dark pattern of randomized monetization. Coffee shows what happens when you try to litigate against it.

In Week 5, we studied AI companion chatbots. Several students reported genuine emotional attachment after just a few days. The chatbot used mirroring, positive reinforcement, responsiveness, and personalization. The same techniques our students used in the Persuasion Exchange. The difference was that the chatbot never got tired, never broke character, and was engineered to maximize engagement.

In Week 6, we examined AI tools that infer psychological traits from behavioral data. This week, we examine what happens when those inferences are used to intervene. The pipeline runs from inference to intervention. Week 6 addressed the first half. This week addresses the second.

The course keeps returning to the same question: what does technology do to human autonomy? Each week adds a layer. This week’s layer is persuasion. And persuasion, when it becomes personalized, adaptive, and continuous, may be the most direct threat to autonomy we’ve encountered, because it doesn’t just observe your choices or capture your attention. It shapes the choices themselves.

The Exercise

Go back to your one-sentence definition of “prohibited manipulative design” from Monday. I want you to ask three questions.

First, what regulatory framework does your definition implicitly adopt? Is it intent-based (focuses on what the designer meant to do)? Outcome-based (focuses on what happened to the user)? Process-based (focuses on how the decision was distorted)? Vulnerability-based (focuses on who was targeted)?

Second, would your definition survive Coffee’s reasoning? The court told the Coffee plaintiffs that their theory was structurally flawed, that subjective harm and moral objections don’t state a claim. Would it say the same about yours?

Third, does your definition reach AI-driven personalized persuasion? If a system tailors its persuasive strategy to your individual psychology in real time, learns from your resistance, and adapts continuously, does your definition capture that? If not, revise it.

If you’re feeling ambitious, draft a two-paragraph model statutory provision. Would you regulate the technique, the intent, the effect, or the targeted population? What would you gain with your approach, and what would you lose?

Bring your answer. We’ll use it.
